metabase-query is a Python package designed to simplify data retrieval from Metabase, specifically focusing on the Card Query API and Dataset API. This package allows data professionals to execute queries using URLs or SQL directly within their Python code, facilitating streamlined access to Metabase data.

- Flexible Data Retrieval: Retrieve data in any format supported by Metabase, including JSON, CSV, and XLSX.

- Simple Integration: Execute queries by simply inputting the question URL and Metabase session—no need to manually provide parameters.

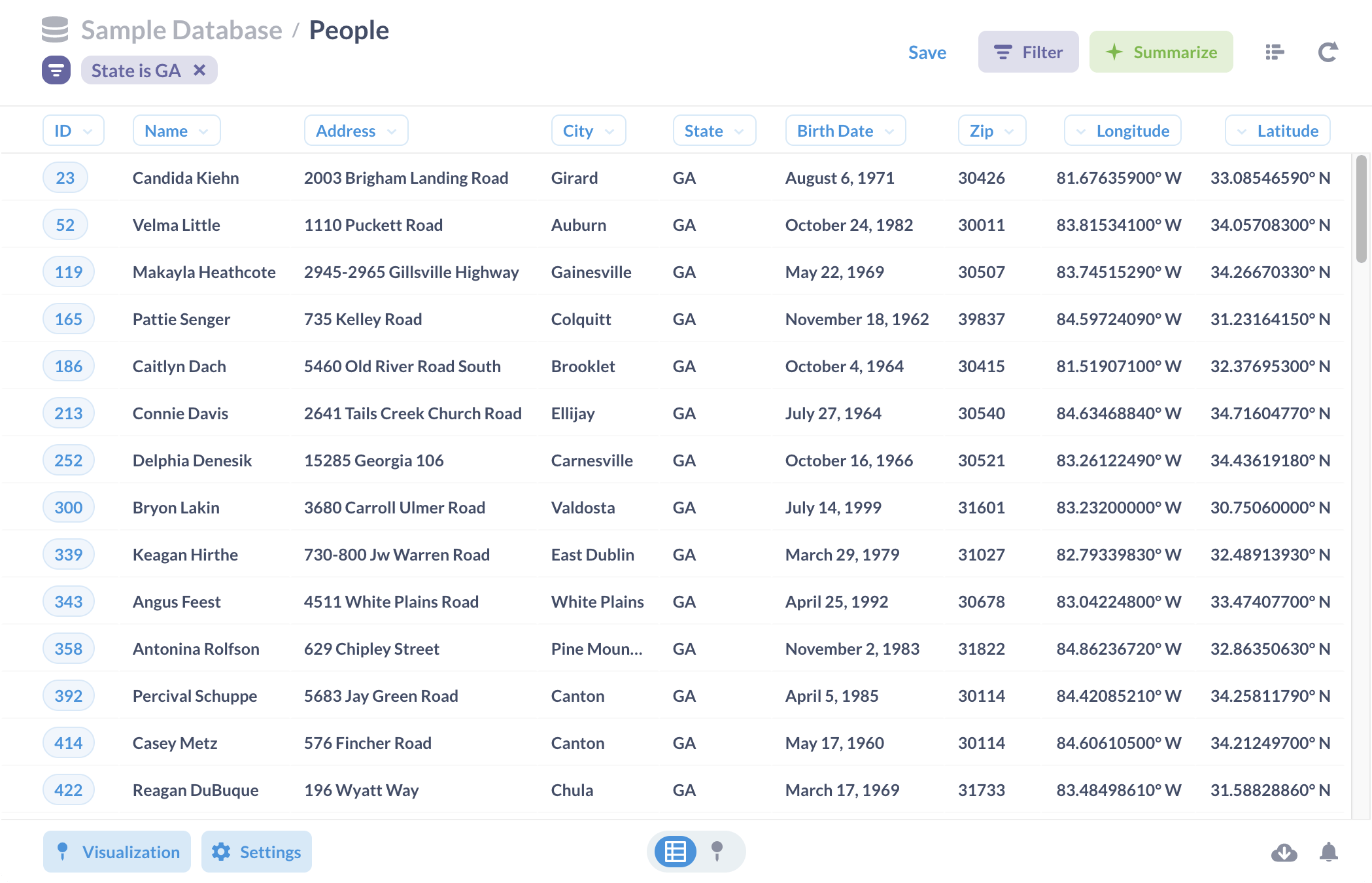

- Consistent Results: JSON results maintain the same column order as displayed in the Metabase UI.

- Session Management: Automatically checks the availability of the Metabase session.

- Effortless Filtering: Easily apply filters to your queries using simple dictionaries.

- Error Handling: Supports automatic retries in case of server errors or slowdowns.

- Bulk Filter Support: Allows entering multiple filter values in a single request.

- Query Versatility: Supports both saved and unsaved questions, as well as SQL queries.

- Utilize asynchronous libraries: Send multiple requests concurrently to retrieve data efficiently.

To install the package, use the following pip command:

pip install --upgrade metabase-queryInstall from GitHub:

pip install --upgrade git+https://github.com/tranngocminhhieu/metabase-query.gitfrom metabase_query import Metabase

# Initialize the MetabaseQuery object

mb = Metabase(metabase_session='YourMetabaseSession')

# Query data using a Metabase question URL

url = 'https://your-domain.com/question/123456-example?created_at=past3months'

data = mb.query(url=url, format='json')url = 'https://your-domain.com/question#eyJkYXRhc2V0X3F1ZXJ5Ijp7ImRhdGFiYXNlIjo2LCJxdWVyeSI6eyJzb3VyY2UtdGFibGUiOjQ4MzV9LCJ0eXBlIjoicXVlcnkifSwiZGlzcGxheSI6InRhYmxlIiwidmlzdWFsaXphdGlvbl9zZXR0aW5ncyI6e319'

data = mb.query(url=url, format='csv')

# Example saving data to a CSV file.

with open('data.csv', 'rb') as f:

f.write(data)mb = Metabase(metabase_session='YourMetabaseSession', retry_errors=None, retry_attempts=3, limit_per_host=5, timeout=600, verbose=True, domain=None)metabase_session: Your Metabase Session.retry_errors: Set toNoneto retry on any error, or provide a list of specific errors to retry only for those. Default isNone.retry_attempts: The number of retry attempts in case of an error. Default is3; set to0to disable retries.limit_per_host: The maximum number of connections allowed per host. Default is5.timeout: The timeout duration in seconds for each connection. Default is600.verbose: Whether to print logs. Default isTrue.domain: Not required for URL-based queries, but mandatory for SQL queries. Default isNone.

It will combine both filter in URL and filter dictionary. Priority filter dictionary if it exists on URL.

filter = {

'order_id': [123456, 456789, 789012], # UNLIMITED values!!!

'status': 'Completed'

}

data = mb.query(url=url, filter=filter, filter_chunk_size=5000)filter: A single dictionary or a list of dictionaries representing the filters.filter_chunk_size: For bulk filter values, the package will divide the values into manageable chunks for processing, then combine the results into a single dataset.

filters = [

{'created_at': '2024-08-01~2024-08-05'},

{'created_at': '2024-08-06~2024-08-10'},

{'created_at': '2024-08-11~2024-08-15'}

]

results = mb.query(url=url, filter=filters)

# Combine results if the datasets have the same columns

from metabase_query.utils import combine_results

data = combine_results(results=[r['data'] for r in results], format='json')It can use with a filter dictionary if needed.

urls = [

'https://your-domain.com/question/123456-example?created_at=2024-08-01~2024-08-05',

'https://your-domain.com/question/123456-example?created_at=2024-08-06~2024-08-10',

'https://your-domain.com/question/123456-example?created_at=2024-08-11~2024-08-15'

]

results = mb.query(url=urls)urls = [

'https://your-domain.com/question/123456-example', # 1

'https://your-domain.com/question/123456-example', # 2

'https://your-domain.com/question/123456-example' # 3

]

filters = [

{'created_at': '2024-08-01~2024-08-05'}, # For URL 1

{'created_at': '2024-08-06~2024-08-10'}, # For URL 2

{'created_at': '2024-08-11~2024-08-15'} # For URL 3

]

results = mb.query(url=urls, filter=filters)sql = '''

SELECT * FROM your_table LIMIT 1000

'''

database = '1-presto' # or integer ID

data = mb.sql(sql=sql, database=database, format='json')sql: A single SQL query or a list of SQL queries.database: A single database ID or a list of database IDs corresponding to the SQL queries. Refer to the database slug in the browser for details.

sql_1 = '''

SELECT * FROM your_table WHERE created_at BETWEEN DATE '2024-08-01' AND '2024-08-05'

'''

sql_2 = '''

SELECT * FROM your_table WHERE created_at BETWEEN DATE '2024-08-06' AND '2024-08-10'

'''

sqls = [sql_1, sql_2]

results = mb.sql(sql=sqls, database=database)Contributions are welcome! Please refer to the issues page for ways you can help.

Good luck with your data queries!