diff --git a/.github/workflows/ccpp.yml b/.github/workflows/ccpp.yml

index dd8a98dd856..21ff07296fb 100644

--- a/.github/workflows/ccpp.yml

+++ b/.github/workflows/ccpp.yml

@@ -1,6 +1,10 @@

name: Darknet Continuous Integration

-on: [push, workflow_dispatch]

+on:

+ push:

+ workflow_dispatch:

+ schedule:

+ - cron: '0 0 * * *'

env:

VCPKG_BINARY_SOURCES: 'clear;nuget,vcpkgbinarycache,readwrite'

@@ -17,24 +21,13 @@ jobs:

run: sudo apt install libopencv-dev

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'LIBSO=1 GPU=0 CUDNN=0 OPENCV=0'

run: |

@@ -72,37 +65,26 @@ jobs:

make clean

- ubuntu-vcpkg-cuda:

+ ubuntu-vcpkg-opencv4-cuda:

runs-on: ubuntu-20.04

steps:

- uses: actions/checkout@v2

+ - uses: lukka/get-cmake@latest

+

- name: Update apt

run: sudo apt update

- name: Install dependencies

run: sudo apt install yasm nasm

- - uses: lukka/get-cmake@latest

-

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'Setup vcpkg and NuGet artifacts backend'

shell: bash

@@ -123,7 +105,7 @@ jobs:

CUDA_PATH: "/usr/local/cuda"

CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

- run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -DisableInteractive -DoNotUpdateDARKNET

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -DisableInteractive -DoNotUpdateDARKNET

- uses: actions/upload-artifact@v2

with:

@@ -143,6 +125,92 @@ jobs:

path: ${{ github.workspace }}/uselib*

+ ubuntu-vcpkg-opencv3-cuda:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: Update apt

+ run: sudo apt update

+ - name: Install dependencies

+ run: sudo apt install yasm nasm

+

+ - name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

+ run: |

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1)

+ setapikey ${{ secrets.BAGET_API_KEY }}

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Build'

+ shell: pwsh

+ env:

+ CUDACXX: "/usr/local/cuda/bin/nvcc"

+ CUDA_PATH: "/usr/local/cuda"

+ CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

+ LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -ForceOpenCVVersion 3 -DisableInteractive -DoNotUpdateDARKNET

+

+

+ ubuntu-vcpkg-opencv2-cuda:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: Update apt

+ run: sudo apt update

+ - name: Install dependencies

+ run: sudo apt install yasm nasm

+

+ - name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

+ run: |

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1)

+ setapikey ${{ secrets.BAGET_API_KEY }}

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Build'

+ shell: pwsh

+ env:

+ CUDACXX: "/usr/local/cuda/bin/nvcc"

+ CUDA_PATH: "/usr/local/cuda"

+ CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

+ LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -ForceOpenCVVersion 2 -DisableInteractive -DoNotUpdateDARKNET

+

+

ubuntu:

runs-on: ubuntu-20.04

steps:

@@ -195,24 +263,13 @@ jobs:

- uses: lukka/get-cmake@latest

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'Build'

shell: pwsh

@@ -221,7 +278,7 @@ jobs:

CUDA_PATH: "/usr/local/cuda"

CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

- run: ./build.ps1 -EnableOPENCV -EnableCUDA -DisableInteractive -DoNotUpdateDARKNET

+ run: ./build.ps1 -EnableOPENCV -EnableCUDA -EnableCUDNN -DisableInteractive -DoNotUpdateDARKNET

- uses: actions/upload-artifact@v2

with:

@@ -253,6 +310,28 @@ jobs:

run: ./build.ps1 -ForceCPP -DisableInteractive -DoNotUpdateDARKNET

+ ubuntu-setup-sh:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1)

+ setapikey ${{ secrets.BAGET_API_KEY }}

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Setup'

+ shell: bash

+ run: ./scripts/setup.sh -InstallCUDA -BypassDRIVER

+

+

osx-vcpkg:

runs-on: macos-latest

steps:

@@ -419,6 +498,28 @@ jobs:

path: ${{ github.workspace }}/uselib*

+ win-setup-ps1:

+ runs-on: windows-latest

+ steps:

+ - uses: actions/checkout@v2

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json ;

+ $(./vcpkg/vcpkg fetch nuget | tail -n 1)

+ setapikey ${{ secrets.BAGET_API_KEY }}

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Setup'

+ shell: pwsh

+ run: ./scripts/setup.ps1 -InstallCUDA

+

+

win-intlibs-cpp:

runs-on: windows-latest

steps:

@@ -431,6 +532,18 @@ jobs:

run: ./build.ps1 -ForceCPP -DisableInteractive -DoNotUpdateDARKNET

+ win-csharp:

+ runs-on: windows-latest

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: 'Build'

+ shell: pwsh

+ run: ./build.ps1 -EnableCSharpWrapper -DisableInteractive -DoNotUpdateDARKNET

+

+

win-intlibs-cuda:

runs-on: windows-latest

steps:

diff --git a/.github/workflows/on_pr.yml b/.github/workflows/on_pr.yml

index 198d84fc4e0..6b6aface5b8 100644

--- a/.github/workflows/on_pr.yml

+++ b/.github/workflows/on_pr.yml

@@ -17,24 +17,13 @@ jobs:

run: sudo apt install libopencv-dev

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'LIBSO=1 GPU=0 CUDNN=0 OPENCV=0'

run: |

@@ -72,37 +61,26 @@ jobs:

make clean

- ubuntu-vcpkg-cuda:

+ ubuntu-vcpkg-opencv4-cuda:

runs-on: ubuntu-20.04

steps:

- uses: actions/checkout@v2

+ - uses: lukka/get-cmake@latest

+

- name: Update apt

run: sudo apt update

- name: Install dependencies

run: sudo apt install yasm nasm

- - uses: lukka/get-cmake@latest

-

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'Setup vcpkg and NuGet artifacts backend'

shell: bash

@@ -120,7 +98,7 @@ jobs:

CUDA_PATH: "/usr/local/cuda"

CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

- run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -DisableInteractive -DoNotUpdateDARKNET

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -DisableInteractive -DoNotUpdateDARKNET

- uses: actions/upload-artifact@v2

with:

@@ -140,6 +118,86 @@ jobs:

path: ${{ github.workspace }}/uselib*

+ ubuntu-vcpkg-opencv3-cuda:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: Update apt

+ run: sudo apt update

+ - name: Install dependencies

+ run: sudo apt install yasm nasm

+

+ - name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

+ run: |

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Build'

+ shell: pwsh

+ env:

+ CUDACXX: "/usr/local/cuda/bin/nvcc"

+ CUDA_PATH: "/usr/local/cuda"

+ CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

+ LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -ForceOpenCVVersion 3 -DisableInteractive -DoNotUpdateDARKNET

+

+

+ ubuntu-vcpkg-opencv2-cuda:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: Update apt

+ run: sudo apt update

+ - name: Install dependencies

+ run: sudo apt install yasm nasm

+

+ - name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

+ run: |

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

+ sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Build'

+ shell: pwsh

+ env:

+ CUDACXX: "/usr/local/cuda/bin/nvcc"

+ CUDA_PATH: "/usr/local/cuda"

+ CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

+ LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

+ run: ./build.ps1 -UseVCPKG -DoNotUpdateVCPKG -EnableOPENCV -EnableCUDA -EnableCUDNN -ForceOpenCVVersion 2 -DisableInteractive -DoNotUpdateDARKNET

+

+

ubuntu:

runs-on: ubuntu-20.04

steps:

@@ -192,24 +250,13 @@ jobs:

- uses: lukka/get-cmake@latest

- name: 'Install CUDA'

+ run: ./scripts/deploy-cuda.sh

+

+ - name: 'Create softlinks for CUDA'

run: |

- sudo apt update

- sudo apt-get dist-upgrade -y

- sudo wget -O /etc/apt/preferences.d/cuda-repository-pin-600 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

- sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

- sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu2004/x86_64/ /"

- sudo apt-get install -y --no-install-recommends cuda-compiler-11-2 cuda-libraries-dev-11-2 cuda-driver-dev-11-2 cuda-cudart-dev-11-2

- sudo apt-get install -y --no-install-recommends libcudnn8-dev

- sudo rm -rf /usr/local/cuda

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/stubs/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so.1

sudo ln -s /usr/local/cuda-11.2/lib64/stubs/libcuda.so /usr/local/cuda-11.2/lib64/libcuda.so

- sudo ln -s /usr/local/cuda-11.2 /usr/local/cuda

- export PATH=/usr/local/cuda/bin:$PATH

- export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH

- nvcc --version

- gcc --version

- name: 'Build'

shell: pwsh

@@ -218,7 +265,7 @@ jobs:

CUDA_PATH: "/usr/local/cuda"

CUDA_TOOLKIT_ROOT_DIR: "/usr/local/cuda"

LD_LIBRARY_PATH: "/usr/local/cuda/lib64:/usr/local/cuda/lib64/stubs:$LD_LIBRARY_PATH"

- run: ./build.ps1 -EnableOPENCV -EnableCUDA -DisableInteractive -DoNotUpdateDARKNET

+ run: ./build.ps1 -EnableOPENCV -EnableCUDA -EnableCUDNN -DisableInteractive -DoNotUpdateDARKNET

- uses: actions/upload-artifact@v2

with:

@@ -250,6 +297,25 @@ jobs:

run: ./build.ps1 -ForceCPP -DisableInteractive -DoNotUpdateDARKNET

+ ubuntu-setup-sh:

+ runs-on: ubuntu-20.04

+ steps:

+ - uses: actions/checkout@v2

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ mono $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Setup'

+ shell: bash

+ run: ./scripts/setup.sh -InstallCUDA -BypassDRIVER

+

+

osx-vcpkg:

runs-on: macos-latest

steps:

@@ -410,6 +476,25 @@ jobs:

path: ${{ github.workspace }}/uselib*

+ win-setup-ps1:

+ runs-on: windows-latest

+ steps:

+ - uses: actions/checkout@v2

+

+ - name: 'Setup vcpkg and NuGet artifacts backend'

+ shell: bash

+ run: >

+ git clone https://github.com/microsoft/vcpkg ;

+ ./vcpkg/bootstrap-vcpkg.sh ;

+ $(./vcpkg/vcpkg fetch nuget | tail -n 1) sources add

+ -Name "vcpkgbinarycache"

+ -Source http://93.49.111.10:5555/v3/index.json

+

+ - name: 'Setup'

+ shell: pwsh

+ run: ./scripts/setup.ps1 -InstallCUDA

+

+

win-intlibs-cpp:

runs-on: windows-latest

steps:

@@ -422,6 +507,18 @@ jobs:

run: ./build.ps1 -ForceCPP -DisableInteractive -DoNotUpdateDARKNET

+ win-csharp:

+ runs-on: windows-latest

+ steps:

+ - uses: actions/checkout@v2

+

+ - uses: lukka/get-cmake@latest

+

+ - name: 'Build'

+ shell: pwsh

+ run: ./build.ps1 -EnableCSharpWrapper -DisableInteractive -DoNotUpdateDARKNET

+

+

win-intlibs-cuda:

runs-on: windows-latest

steps:

diff --git a/.gitignore b/.gitignore

index 174f0b5a378..916cfb88461 100644

--- a/.gitignore

+++ b/.gitignore

@@ -22,6 +22,8 @@ cfg/

temp/

build/darknet/*

build_*/

+ninja/

+ninja.zip

vcpkg_installed/

!build/darknet/YoloWrapper.cs

.fuse*

@@ -36,6 +38,7 @@ build/.ninja_deps

build/.ninja_log

build/Makefile

*/vcpkg-manifest-install.log

+build.log

# OS Generated #

.DS_Store*

diff --git a/.travis.yml b/.travis.yml

index 447a72a179d..f208498dbcd 100644

--- a/.travis.yml

+++ b/.travis.yml

@@ -16,32 +16,6 @@ matrix:

- additional_defines=" -DENABLE_CUDA=OFF -DENABLE_CUDNN=OFF -DENABLE_OPENCV=OFF"

- MATRIX_EVAL=""

- - os: osx

- compiler: gcc

- name: macOS - gcc (llvm backend) - opencv@2

- osx_image: xcode12.3

- env:

- - OpenCV_DIR="/usr/local/opt/opencv@2/"

- - additional_defines="-DOpenCV_DIR=${OpenCV_DIR} -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv@2"

-

- - os: osx

- compiler: gcc

- name: macOS - gcc (llvm backend) - opencv@3

- osx_image: xcode12.3

- env:

- - OpenCV_DIR="/usr/local/opt/opencv@3/"

- - additional_defines="-DOpenCV_DIR=${OpenCV_DIR} -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv@3"

-

- - os: osx

- compiler: gcc

- name: macOS - gcc (llvm backend) - opencv(latest)

- osx_image: xcode12.3

- env:

- - additional_defines=" -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv"

-

- os: osx

compiler: clang

name: macOS - clang

@@ -58,40 +32,6 @@ matrix:

- additional_defines="-DBUILD_AS_CPP:BOOL=TRUE -DENABLE_CUDA=OFF -DENABLE_CUDNN=OFF -DENABLE_OPENCV=OFF"

- MATRIX_EVAL=""

- - os: osx

- compiler: clang

- name: macOS - clang - opencv@2

- osx_image: xcode12.3

- env:

- - OpenCV_DIR="/usr/local/opt/opencv@2/"

- - additional_defines="-DOpenCV_DIR=${OpenCV_DIR} -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv@2"

-

- - os: osx

- compiler: clang

- name: macOS - clang - opencv@3

- osx_image: xcode12.3

- env:

- - OpenCV_DIR="/usr/local/opt/opencv@3/"

- - additional_defines="-DOpenCV_DIR=${OpenCV_DIR} -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv@3"

-

- - os: osx

- compiler: clang

- name: macOS - clang - opencv(latest)

- osx_image: xcode12.3

- env:

- - additional_defines=" -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv"

-

- - os: osx

- compiler: clang

- name: macOS - clang - opencv(latest) - libomp

- osx_image: xcode12.3

- env:

- - additional_defines=" -DENABLE_CUDA=OFF"

- - MATRIX_EVAL="brew install opencv libomp"

-

- os: linux

compiler: clang

dist: bionic

diff --git a/CMakeLists.txt b/CMakeLists.txt

index 0029abe78ee..0e1abf32d9c 100644

--- a/CMakeLists.txt

+++ b/CMakeLists.txt

@@ -7,6 +7,8 @@ set(Darknet_PATCH_VERSION 5)

set(Darknet_TWEAK_VERSION 4)

set(Darknet_VERSION ${Darknet_MAJOR_VERSION}.${Darknet_MINOR_VERSION}.${Darknet_PATCH_VERSION}.${Darknet_TWEAK_VERSION})

+message("Darknet_VERSION: ${Darknet_VERSION}")

+

option(CMAKE_VERBOSE_MAKEFILE "Create verbose makefile" ON)

option(CUDA_VERBOSE_BUILD "Create verbose CUDA build" ON)

option(BUILD_SHARED_LIBS "Create dark as a shared library" ON)

@@ -19,20 +21,50 @@ option(ENABLE_CUDNN "Enable CUDNN" ON)

option(ENABLE_CUDNN_HALF "Enable CUDNN Half precision" ON)

option(ENABLE_ZED_CAMERA "Enable ZED Camera support" ON)

option(ENABLE_VCPKG_INTEGRATION "Enable VCPKG integration" ON)

+option(ENABLE_CSHARP_WRAPPER "Enable building a csharp wrapper" OFF)

option(VCPKG_BUILD_OPENCV_WITH_CUDA "Build OpenCV with CUDA extension integration" ON)

+option(VCPKG_USE_OPENCV2 "Use legacy OpenCV 2" OFF)

+option(VCPKG_USE_OPENCV3 "Use legacy OpenCV 3" OFF)

+option(VCPKG_USE_OPENCV4 "Use OpenCV 4" ON)

-if(VCPKG_BUILD_OPENCV_WITH_CUDA AND NOT APPLE)

- list(APPEND VCPKG_MANIFEST_FEATURES "opencv-cuda")

+if(VCPKG_USE_OPENCV4 AND VCPKG_USE_OPENCV2)

+ message(STATUS "You required vcpkg feature related to OpenCV 2 but forgot to turn off those for OpenCV 4, doing that for you")

+ set(VCPKG_USE_OPENCV4 OFF CACHE BOOL "Use OpenCV 4" FORCE)

+endif()

+if(VCPKG_USE_OPENCV4 AND VCPKG_USE_OPENCV3)

+ message(STATUS "You required vcpkg feature related to OpenCV 3 but forgot to turn off those for OpenCV 4, doing that for you")

+ set(VCPKG_USE_OPENCV4 OFF CACHE BOOL "Use OpenCV 4" FORCE)

+endif()

+if(VCPKG_USE_OPENCV2 AND VCPKG_USE_OPENCV3)

+ message(STATUS "You required vcpkg features related to both OpenCV 2 and OpenCV 3. Impossible to satisfy, keeping only OpenCV 3")

+ set(VCPKG_USE_OPENCV2 OFF CACHE BOOL "Use legacy OpenCV 2" FORCE)

endif()

+

if(ENABLE_CUDA AND NOT APPLE)

list(APPEND VCPKG_MANIFEST_FEATURES "cuda")

endif()

-if(ENABLE_OPENCV)

- list(APPEND VCPKG_MANIFEST_FEATURES "opencv-base")

-endif()

if(ENABLE_CUDNN AND ENABLE_CUDA AND NOT APPLE)

list(APPEND VCPKG_MANIFEST_FEATURES "cudnn")

endif()

+if(ENABLE_OPENCV)

+ if(VCPKG_BUILD_OPENCV_WITH_CUDA AND NOT APPLE)

+ if(VCPKG_USE_OPENCV4)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv-cuda")

+ elseif(VCPKG_USE_OPENCV3)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv3-cuda")

+ elseif(VCPKG_USE_OPENCV2)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv2-cuda")

+ endif()

+ else()

+ if(VCPKG_USE_OPENCV4)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv-base")

+ elseif(VCPKG_USE_OPENCV3)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv3-base")

+ elseif(VCPKG_USE_OPENCV2)

+ list(APPEND VCPKG_MANIFEST_FEATURES "opencv2-base")

+ endif()

+ endif()

+endif()

if(NOT CMAKE_HOST_SYSTEM_PROCESSOR AND NOT WIN32)

execute_process(COMMAND "uname" "-m" OUTPUT_VARIABLE CMAKE_HOST_SYSTEM_PROCESSOR OUTPUT_STRIP_TRAILING_WHITESPACE)

@@ -235,17 +267,19 @@ set(CMAKE_CXX_FLAGS "${ADDITIONAL_CXX_FLAGS} ${SHAREDLIB_CXX_FLAGS} ${CMAKE_CXX_

set(CMAKE_C_FLAGS "${ADDITIONAL_C_FLAGS} ${SHAREDLIB_C_FLAGS} ${CMAKE_C_FLAGS}")

if(OpenCV_FOUND)

- if(ENABLE_CUDA AND NOT OpenCV_CUDA_VERSION)

- set(BUILD_USELIB_TRACK "FALSE" CACHE BOOL "Build uselib_track" FORCE)

- message(STATUS " -> darknet is fine for now, but uselib_track has been disabled!")

- message(STATUS " -> Please rebuild OpenCV from sources with CUDA support to enable it")

- elseif(ENABLE_CUDA AND OpenCV_CUDA_VERSION)

+ if(ENABLE_CUDA AND OpenCV_CUDA_VERSION)

if(TARGET opencv_cudaoptflow)

list(APPEND OpenCV_LINKED_COMPONENTS "opencv_cudaoptflow")

endif()

if(TARGET opencv_cudaimgproc)

list(APPEND OpenCV_LINKED_COMPONENTS "opencv_cudaimgproc")

endif()

+ elseif(ENABLE_CUDA AND NOT OpenCV_CUDA_VERSION)

+ set(BUILD_USELIB_TRACK "FALSE" CACHE BOOL "Build uselib_track" FORCE)

+ message(STATUS " -> darknet is fine for now, but uselib_track has been disabled!")

+ message(STATUS " -> Please rebuild OpenCV from sources with CUDA support to enable it")

+ else()

+ set(BUILD_USELIB_TRACK "FALSE" CACHE BOOL "Build uselib_track" FORCE)

endif()

endif()

@@ -543,3 +577,7 @@ install(FILES

"${PROJECT_BINARY_DIR}/DarknetConfigVersion.cmake"

DESTINATION "${INSTALL_CMAKE_DIR}"

)

+

+if(ENABLE_CSHARP_WRAPPER)

+ add_subdirectory(src/csharp)

+endif()

diff --git a/README.md b/README.md

index 4a4a67f49f8..337d095d44f 100644

--- a/README.md

+++ b/README.md

@@ -6,16 +6,18 @@ Paper YOLO v4: https://arxiv.org/abs/2004.10934

Paper Scaled YOLO v4: https://arxiv.org/abs/2011.08036 use to reproduce results: [ScaledYOLOv4](https://github.com/WongKinYiu/ScaledYOLOv4)

-More details in articles on medium:

- * [Scaled_YOLOv4](https://alexeyab84.medium.com/scaled-yolo-v4-is-the-best-neural-network-for-object-detection-on-ms-coco-dataset-39dfa22fa982?source=friends_link&sk=c8553bfed861b1a7932f739d26f487c8)

- * [YOLOv4](https://medium.com/@alexeyab84/yolov4-the-most-accurate-real-time-neural-network-on-ms-coco-dataset-73adfd3602fe?source=friends_link&sk=6039748846bbcf1d960c3061542591d7)

+More details in articles on medium:

+

+- [Scaled_YOLOv4](https://alexeyab84.medium.com/scaled-yolo-v4-is-the-best-neural-network-for-object-detection-on-ms-coco-dataset-39dfa22fa982?source=friends_link&sk=c8553bfed861b1a7932f739d26f487c8)

+- [YOLOv4](https://medium.com/@alexeyab84/yolov4-the-most-accurate-real-time-neural-network-on-ms-coco-dataset-73adfd3602fe?source=friends_link&sk=6039748846bbcf1d960c3061542591d7)

Manual: https://github.com/AlexeyAB/darknet/wiki

-Discussion:

- - [Reddit](https://www.reddit.com/r/MachineLearning/comments/gydxzd/p_yolov4_the_most_accurate_realtime_neural/)

- - [Google-groups](https://groups.google.com/forum/#!forum/darknet)

- - [Discord](https://discord.gg/zSq8rtW)

+Discussion:

+

+- [Reddit](https://www.reddit.com/r/MachineLearning/comments/gydxzd/p_yolov4_the_most_accurate_realtime_neural/)

+- [Google-groups](https://groups.google.com/forum/#!forum/darknet)

+- [Discord](https://discord.gg/zSq8rtW)

About Darknet framework: http://pjreddie.com/darknet/

@@ -29,73 +31,72 @@ About Darknet framework: http://pjreddie.com/darknet/

[](https://colab.research.google.com/drive/12QusaaRj_lUwCGDvQNfICpa7kA7_a2dE)

[](https://colab.research.google.com/drive/1_GdoqCJWXsChrOiY8sZMr_zbr_fH-0Fg)

-

-* [YOLOv4 model zoo](https://github.com/AlexeyAB/darknet/wiki/YOLOv4-model-zoo)

-* [Requirements (and how to install dependencies)](#requirements)

-* [Pre-trained models](#pre-trained-models)

-* [FAQ - frequently asked questions](https://github.com/AlexeyAB/darknet/wiki/FAQ---frequently-asked-questions)

-* [Explanations in issues](https://github.com/AlexeyAB/darknet/issues?q=is%3Aopen+is%3Aissue+label%3AExplanations)

-* [Yolo v4 in other frameworks (TensorRT, TensorFlow, PyTorch, OpenVINO, OpenCV-dnn, TVM,...)](#yolo-v4-in-other-frameworks)

-* [Datasets](#datasets)

+- [YOLOv4 model zoo](https://github.com/AlexeyAB/darknet/wiki/YOLOv4-model-zoo)

+- [Requirements (and how to install dependencies)](#requirements)

+- [Pre-trained models](#pre-trained-models)

+- [FAQ - frequently asked questions](https://github.com/AlexeyAB/darknet/wiki/FAQ---frequently-asked-questions)

+- [Explanations in issues](https://github.com/AlexeyAB/darknet/issues?q=is%3Aopen+is%3Aissue+label%3AExplanations)

+- [Yolo v4 in other frameworks (TensorRT, TensorFlow, PyTorch, OpenVINO, OpenCV-dnn, TVM,...)](#yolo-v4-in-other-frameworks)

+- [Datasets](#datasets)

- [Yolo v4, v3 and v2 for Windows and Linux](#yolo-v4-v3-and-v2-for-windows-and-linux)

- [(neural networks for object detection)](#neural-networks-for-object-detection)

- - [GeForce RTX 2080 Ti:](#geforce-rtx-2080-ti)

+ - [GeForce RTX 2080 Ti](#geforce-rtx-2080-ti)

- [Youtube video of results](#youtube-video-of-results)

- [How to evaluate AP of YOLOv4 on the MS COCO evaluation server](#how-to-evaluate-ap-of-yolov4-on-the-ms-coco-evaluation-server)

- [How to evaluate FPS of YOLOv4 on GPU](#how-to-evaluate-fps-of-yolov4-on-gpu)

- [Pre-trained models](#pre-trained-models)

- - [Requirements](#requirements)

+ - [Requirements for Windows, Linux and macOS](#requirements-for-windows-linux-and-macos)

- [Yolo v4 in other frameworks](#yolo-v4-in-other-frameworks)

- [Datasets](#datasets)

- [Improvements in this repository](#improvements-in-this-repository)

- [How to use on the command line](#how-to-use-on-the-command-line)

- [For using network video-camera mjpeg-stream with any Android smartphone](#for-using-network-video-camera-mjpeg-stream-with-any-android-smartphone)

- [How to compile on Linux/macOS (using `CMake`)](#how-to-compile-on-linuxmacos-using-cmake)

- - [Using `vcpkg`](#using-vcpkg)

- - [Using libraries manually provided](#using-libraries-manually-provided)

+ - [Using also PowerShell](#using-also-powershell)

- [How to compile on Linux (using `make`)](#how-to-compile-on-linux-using-make)

- [How to compile on Windows (using `CMake`)](#how-to-compile-on-windows-using-cmake)

- [How to compile on Windows (using `vcpkg`)](#how-to-compile-on-windows-using-vcpkg)

- [How to train with multi-GPU](#how-to-train-with-multi-gpu)

- [How to train (to detect your custom objects)](#how-to-train-to-detect-your-custom-objects)

- - [How to train tiny-yolo (to detect your custom objects):](#how-to-train-tiny-yolo-to-detect-your-custom-objects)

- - [When should I stop training:](#when-should-i-stop-training)

- - [Custom object detection:](#custom-object-detection)

- - [How to improve object detection:](#how-to-improve-object-detection)

- - [How to mark bounded boxes of objects and create annotation files:](#how-to-mark-bounded-boxes-of-objects-and-create-annotation-files)

+ - [How to train tiny-yolo (to detect your custom objects)](#how-to-train-tiny-yolo-to-detect-your-custom-objects)

+ - [When should I stop training](#when-should-i-stop-training)

+ - [Custom object detection](#custom-object-detection)

+ - [How to improve object detection](#how-to-improve-object-detection)

+ - [How to mark bounded boxes of objects and create annotation files](#how-to-mark-bounded-boxes-of-objects-and-create-annotation-files)

- [How to use Yolo as DLL and SO libraries](#how-to-use-yolo-as-dll-and-so-libraries)

-

+

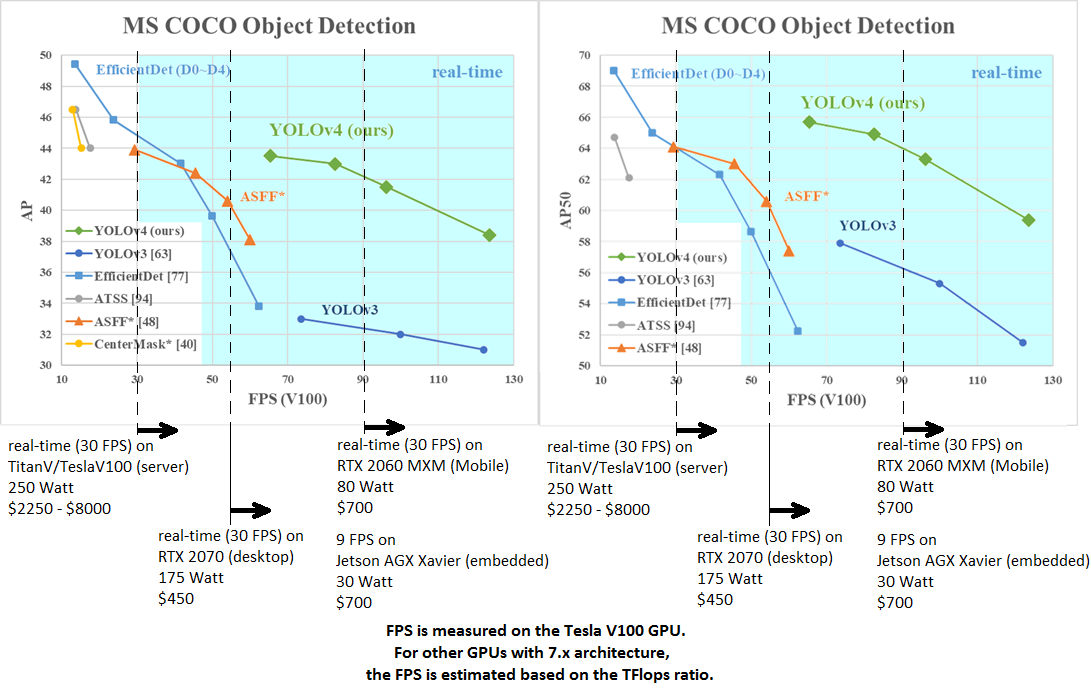

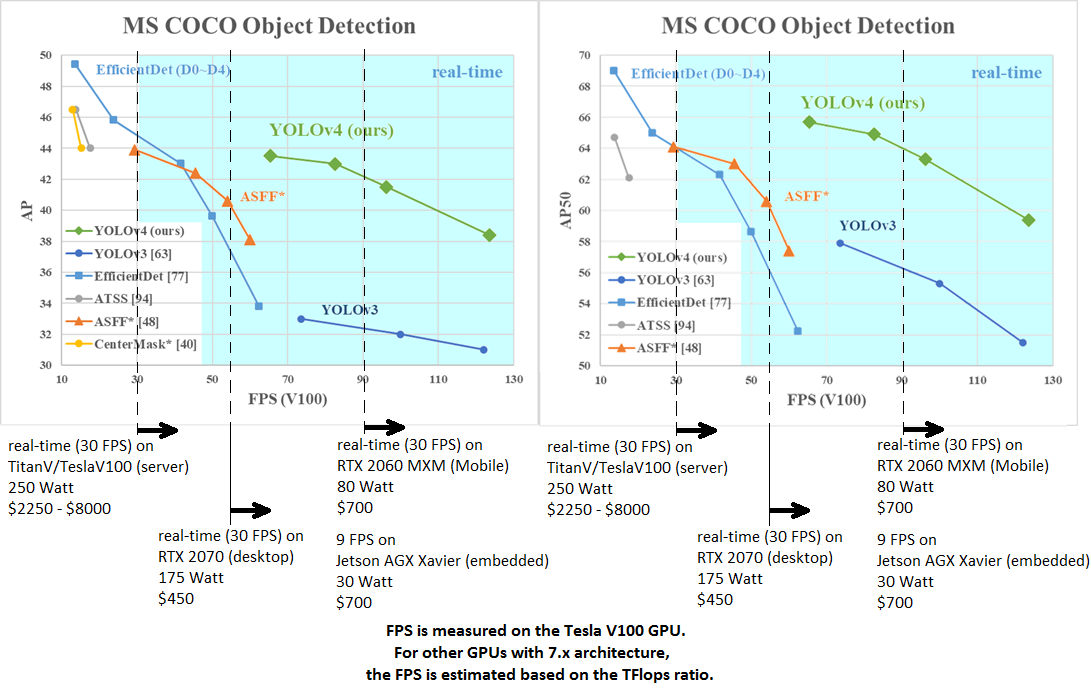

AP50:95 - FPS (Tesla V100) Paper: https://arxiv.org/abs/2011.08036

----

- AP50:95 / AP50 - FPS (Tesla V100) Paper: https://arxiv.org/abs/2004.10934

-

+ AP50:95 / AP50 - FPS (Tesla V100) Paper: https://arxiv.org/abs/2004.10934

tkDNN-TensorRT accelerates YOLOv4 **~2x** times for batch=1 and **3x-4x** times for batch=4.

-* tkDNN: https://github.com/ceccocats/tkDNN

-* OpenCV: https://gist.github.com/YashasSamaga/48bdb167303e10f4d07b754888ddbdcf

-

-#### GeForce RTX 2080 Ti:

-| Network Size | Darknet, FPS (avg)| tkDNN TensorRT FP32, FPS | tkDNN TensorRT FP16, FPS | OpenCV FP16, FPS | tkDNN TensorRT FP16 batch=4, FPS | OpenCV FP16 batch=4, FPS | tkDNN Speedup |

-|:-----:|:--------:|--------:|--------:|--------:|--------:|--------:|------:|

-|320 | 100 | 116 | **202** | 183 | 423 | **430** | **4.3x** |

-|416 | 82 | 103 | **162** | 159 | 284 | **294** | **3.6x** |

-|512 | 69 | 91 | 134 | **138** | 206 | **216** | **3.1x** |

-|608 | 53 | 62 | 103 | **115**| 150 | **150** | **2.8x** |

-|Tiny 416 | 443 | 609 | **790** | 773 | **1774** | 1353 | **3.5x** |

-|Tiny 416 CPU Core i7 7700HQ | 3.4 | - | - | 42 | - | 39 | **12x** |

-

-* Yolo v4 Full comparison: [map_fps](https://user-images.githubusercontent.com/4096485/80283279-0e303e00-871f-11ea-814c-870967d77fd1.png)

-* Yolo v4 tiny comparison: [tiny_fps](https://user-images.githubusercontent.com/4096485/85734112-6e366700-b705-11ea-95d1-fcba0de76d72.png)

-* CSPNet: [paper](https://arxiv.org/abs/1911.11929) and [map_fps](https://user-images.githubusercontent.com/4096485/71702416-6645dc00-2de0-11ea-8d65-de7d4b604021.png) comparison: https://github.com/WongKinYiu/CrossStagePartialNetworks

-* Yolo v3 on MS COCO: [Speed / Accuracy (mAP@0.5) chart](https://user-images.githubusercontent.com/4096485/52151356-e5d4a380-2683-11e9-9d7d-ac7bc192c477.jpg)

-* Yolo v3 on MS COCO (Yolo v3 vs RetinaNet) - Figure 3: https://arxiv.org/pdf/1804.02767v1.pdf

-* Yolo v2 on Pascal VOC 2007: https://hsto.org/files/a24/21e/068/a2421e0689fb43f08584de9d44c2215f.jpg

-* Yolo v2 on Pascal VOC 2012 (comp4): https://hsto.org/files/3a6/fdf/b53/3a6fdfb533f34cee9b52bdd9bb0b19d9.jpg

+

+- tkDNN: https://github.com/ceccocats/tkDNN

+- OpenCV: https://gist.github.com/YashasSamaga/48bdb167303e10f4d07b754888ddbdcf

+

+### GeForce RTX 2080 Ti

+

+| Network Size | Darknet, FPS (avg) | tkDNN TensorRT FP32, FPS | tkDNN TensorRT FP16, FPS | OpenCV FP16, FPS | tkDNN TensorRT FP16 batch=4, FPS | OpenCV FP16 batch=4, FPS | tkDNN Speedup |

+|:--------------------------:|:------------------:|-------------------------:|-------------------------:|-----------------:|---------------------------------:|-------------------------:|--------------:|

+|320 | 100 | 116 | **202** | 183 | 423 | **430** | **4.3x** |

+|416 | 82 | 103 | **162** | 159 | 284 | **294** | **3.6x** |

+|512 | 69 | 91 | 134 | **138** | 206 | **216** | **3.1x** |

+|608 | 53 | 62 | 103 | **115** | 150 | **150** | **2.8x** |

+|Tiny 416 | 443 | 609 | **790** | 773 | **1774** | 1353 | **3.5x** |

+|Tiny 416 CPU Core i7 7700HQ | 3.4 | - | - | 42 | - | 39 | **12x** |

+

+- Yolo v4 Full comparison: [map_fps](https://user-images.githubusercontent.com/4096485/80283279-0e303e00-871f-11ea-814c-870967d77fd1.png)

+- Yolo v4 tiny comparison: [tiny_fps](https://user-images.githubusercontent.com/4096485/85734112-6e366700-b705-11ea-95d1-fcba0de76d72.png)

+- CSPNet: [paper](https://arxiv.org/abs/1911.11929) and [map_fps](https://user-images.githubusercontent.com/4096485/71702416-6645dc00-2de0-11ea-8d65-de7d4b604021.png) comparison: https://github.com/WongKinYiu/CrossStagePartialNetworks

+- Yolo v3 on MS COCO: [Speed / Accuracy (mAP@0.5) chart](https://user-images.githubusercontent.com/4096485/52151356-e5d4a380-2683-11e9-9d7d-ac7bc192c477.jpg)

+- Yolo v3 on MS COCO (Yolo v3 vs RetinaNet) - Figure 3: https://arxiv.org/pdf/1804.02767v1.pdf

+- Yolo v2 on Pascal VOC 2007: https://hsto.org/files/a24/21e/068/a2421e0689fb43f08584de9d44c2215f.jpg

+- Yolo v2 on Pascal VOC 2012 (comp4): https://hsto.org/files/3a6/fdf/b53/3a6fdfb533f34cee9b52bdd9bb0b19d9.jpg

#### Youtube video of results

@@ -132,9 +133,9 @@ eval=coco

3. Get any .avi/.mp4 video file (preferably not more than 1920x1080 to avoid bottlenecks in CPU performance)

4. Run one of two commands and look at the AVG FPS:

-* include video_capturing + NMS + drawing_bboxes:

+- include video_capturing + NMS + drawing_bboxes:

`./darknet detector demo cfg/coco.data cfg/yolov4.cfg yolov4.weights test.mp4 -dont_show -ext_output`

-* exclude video_capturing + NMS + drawing_bboxes:

+- exclude video_capturing + NMS + drawing_bboxes:

`./darknet detector demo cfg/coco.data cfg/yolov4.cfg yolov4.weights test.mp4 -benchmark`

#### Pre-trained models

@@ -143,52 +144,52 @@ There are weights-file for different cfg-files (trained for MS COCO dataset):

FPS on RTX 2070 (R) and Tesla V100 (V):

-* [yolov4x-mish.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4x-mish.cfg) - 640x640 - **67.9% mAP@0.5 (49.4% AP@0.5:0.95) - 23(R) FPS / 50(V) FPS** - 221 BFlops (110 FMA) - 381 MB: [yolov4x-mish.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4x-mish.weights)

- * pre-trained weights for training: https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4x-mish.conv.166

+- [yolov4x-mish.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4x-mish.cfg) - 640x640 - **67.9% mAP@0.5 (49.4% AP@0.5:0.95) - 23(R) FPS / 50(V) FPS** - 221 BFlops (110 FMA) - 381 MB: [yolov4x-mish.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4x-mish.weights)

+ - pre-trained weights for training: https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4x-mish.conv.166

-* [yolov4-csp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4-csp.cfg) - 202 MB: [yolov4-csp.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-csp.weights) paper [Scaled Yolo v4](https://arxiv.org/abs/2011.08036)

+- [yolov4-csp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4-csp.cfg) - 202 MB: [yolov4-csp.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-csp.weights) paper [Scaled Yolo v4](https://arxiv.org/abs/2011.08036)

just change `width=` and `height=` parameters in `yolov4-csp.cfg` file and use the same `yolov4-csp.weights` file for all cases:

- * `width=640 height=640` in cfg: **66.2% mAP@0.5 (47.5% AP@0.5:0.95) - 70(V) FPS** - 120 (60 FMA) BFlops

- * `width=512 height=512` in cfg: **64.8% mAP@0.5 (46.2% AP@0.5:0.95) - 93(V) FPS** - 77 (39 FMA) BFlops

- * pre-trained weights for training: https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-csp.conv.142

-

-* [yolov4.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4.cfg) - 245 MB: [yolov4.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v3_optimal/yolov4.weights) (Google-drive mirror [yolov4.weights](https://drive.google.com/open?id=1cewMfusmPjYWbrnuJRuKhPMwRe_b9PaT) ) paper [Yolo v4](https://arxiv.org/abs/2004.10934)

+ - `width=640 height=640` in cfg: **66.2% mAP@0.5 (47.5% AP@0.5:0.95) - 70(V) FPS** - 120 (60 FMA) BFlops

+ - `width=512 height=512` in cfg: **64.8% mAP@0.5 (46.2% AP@0.5:0.95) - 93(V) FPS** - 77 (39 FMA) BFlops

+ - pre-trained weights for training: https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-csp.conv.142

+

+- [yolov4.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4.cfg) - 245 MB: [yolov4.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v3_optimal/yolov4.weights) (Google-drive mirror [yolov4.weights](https://drive.google.com/open?id=1cewMfusmPjYWbrnuJRuKhPMwRe_b9PaT) ) paper [Yolo v4](https://arxiv.org/abs/2004.10934)

just change `width=` and `height=` parameters in `yolov4.cfg` file and use the same `yolov4.weights` file for all cases:

- * `width=608 height=608` in cfg: **65.7% mAP@0.5 (43.5% AP@0.5:0.95) - 34(R) FPS / 62(V) FPS** - 128.5 BFlops

- * `width=512 height=512` in cfg: **64.9% mAP@0.5 (43.0% AP@0.5:0.95) - 45(R) FPS / 83(V) FPS** - 91.1 BFlops

- * `width=416 height=416` in cfg: **62.8% mAP@0.5 (41.2% AP@0.5:0.95) - 55(R) FPS / 96(V) FPS** - 60.1 BFlops

- * `width=320 height=320` in cfg: **60% mAP@0.5 ( 38% AP@0.5:0.95) - 63(R) FPS / 123(V) FPS** - 35.5 BFlops

+ - `width=608 height=608` in cfg: **65.7% mAP@0.5 (43.5% AP@0.5:0.95) - 34(R) FPS / 62(V) FPS** - 128.5 BFlops

+ - `width=512 height=512` in cfg: **64.9% mAP@0.5 (43.0% AP@0.5:0.95) - 45(R) FPS / 83(V) FPS** - 91.1 BFlops

+ - `width=416 height=416` in cfg: **62.8% mAP@0.5 (41.2% AP@0.5:0.95) - 55(R) FPS / 96(V) FPS** - 60.1 BFlops

+ - `width=320 height=320` in cfg: **60% mAP@0.5 ( 38% AP@0.5:0.95) - 63(R) FPS / 123(V) FPS** - 35.5 BFlops
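
The resolution change described for `yolov4.cfg` and `yolov4-csp.cfg` (edit `width=` and `height=`, keep the same weights file) can be sketched as a small script. This is only an illustration, not part of the repository: the helper name `set_network_size` is hypothetical, and it assumes the cfg file keeps `width=` and `height=` as plain `key=value` lines inside a `[net]` section, as the linked cfg files do.

```python
import re


def set_network_size(cfg_text: str, width: int, height: int) -> str:
    """Return cfg_text with width= and height= replaced inside the [net] section.

    Hypothetical helper for illustration; lines in other sections are left untouched.
    """
    out = []
    in_net = False
    for line in cfg_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("["):
            # A new section header: only [net] carries the network input size.
            in_net = stripped.lower() == "[net]"
        elif in_net:
            if re.match(r"width\s*=", stripped):
                line = f"width={width}"
            elif re.match(r"height\s*=", stripped):
                line = f"height={height}"
        out.append(line)
    return "\n".join(out) + "\n"


# Tiny made-up cfg fragment, used here instead of a real cfg file.
sample = "[net]\nbatch=64\nwidth=608\nheight=608\n\n[convolutional]\nfilters=32\n"
resized = set_network_size(sample, 416, 416)
print(resized)
```

To apply it to a real file, read the cfg into a string, pass it through the helper, and write the result to a new cfg path, then run Darknet with that cfg and the unchanged weights file.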

-* [yolov4-tiny.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4-tiny.cfg) - **40.2% mAP@0.5 - 371(1080Ti) FPS / 330(RTX2070) FPS** - 6.9 BFlops - 23.1 MB: [yolov4-tiny.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-tiny.weights)

+- [yolov4-tiny.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov4-tiny.cfg) - **40.2% mAP@0.5 - 371(1080Ti) FPS / 330(RTX2070) FPS** - 6.9 BFlops - 23.1 MB: [yolov4-tiny.weights](https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v4_pre/yolov4-tiny.weights)

-* [enet-coco.cfg (EfficientNetB0-Yolov3)](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/enet-coco.cfg) - **45.5% mAP@0.5 - 55(R) FPS** - 3.7 BFlops - 18.3 MB: [enetb0-coco_final.weights](https://drive.google.com/file/d/1FlHeQjWEQVJt0ay1PVsiuuMzmtNyv36m/view)

+- [enet-coco.cfg (EfficientNetB0-Yolov3)](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/enet-coco.cfg) - **45.5% mAP@0.5 - 55(R) FPS** - 3.7 BFlops - 18.3 MB: [enetb0-coco_final.weights](https://drive.google.com/file/d/1FlHeQjWEQVJt0ay1PVsiuuMzmtNyv36m/view)

-* [yolov3-openimages.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-openimages.cfg) - 247 MB - 18(R) FPS - OpenImages dataset: [yolov3-openimages.weights](https://pjreddie.com/media/files/yolov3-openimages.weights)

+- [yolov3-openimages.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-openimages.cfg) - 247 MB - 18(R) FPS - OpenImages dataset: [yolov3-openimages.weights](https://pjreddie.com/media/files/yolov3-openimages.weights)

CLICK ME - Yolo v3 models

-* [csresnext50-panet-spp-original-optimal.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/csresnext50-panet-spp-original-optimal.cfg) - **65.4% mAP@0.5 (43.2% AP@0.5:0.95) - 32(R) FPS** - 100.5 BFlops - 217 MB: [csresnext50-panet-spp-original-optimal_final.weights](https://drive.google.com/open?id=1_NnfVgj0EDtb_WLNoXV8Mo7WKgwdYZCc)

+- [csresnext50-panet-spp-original-optimal.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/csresnext50-panet-spp-original-optimal.cfg) - **65.4% mAP@0.5 (43.2% AP@0.5:0.95) - 32(R) FPS** - 100.5 BFlops - 217 MB: [csresnext50-panet-spp-original-optimal_final.weights](https://drive.google.com/open?id=1_NnfVgj0EDtb_WLNoXV8Mo7WKgwdYZCc)

-* [yolov3-spp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-spp.cfg) - **60.6% mAP@0.5 - 38(R) FPS** - 141.5 BFlops - 240 MB: [yolov3-spp.weights](https://pjreddie.com/media/files/yolov3-spp.weights)

+- [yolov3-spp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-spp.cfg) - **60.6% mAP@0.5 - 38(R) FPS** - 141.5 BFlops - 240 MB: [yolov3-spp.weights](https://pjreddie.com/media/files/yolov3-spp.weights)

-* [csresnext50-panet-spp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/csresnext50-panet-spp.cfg) - **60.0% mAP@0.5 - 44 FPS** - 71.3 BFlops - 217 MB: [csresnext50-panet-spp_final.weights](https://drive.google.com/file/d/1aNXdM8qVy11nqTcd2oaVB3mf7ckr258-/view?usp=sharing)

+- [csresnext50-panet-spp.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/csresnext50-panet-spp.cfg) - **60.0% mAP@0.5 - 44 FPS** - 71.3 BFlops - 217 MB: [csresnext50-panet-spp_final.weights](https://drive.google.com/file/d/1aNXdM8qVy11nqTcd2oaVB3mf7ckr258-/view?usp=sharing)

-* [yolov3.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3.cfg) - **55.3% mAP@0.5 - 66(R) FPS** - 65.9 BFlops - 236 MB: [yolov3.weights](https://pjreddie.com/media/files/yolov3.weights)

+- [yolov3.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3.cfg) - **55.3% mAP@0.5 - 66(R) FPS** - 65.9 BFlops - 236 MB: [yolov3.weights](https://pjreddie.com/media/files/yolov3.weights)

-* [yolov3-tiny.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-tiny.cfg) - **33.1% mAP@0.5 - 345(R) FPS** - 5.6 BFlops - 33.7 MB: [yolov3-tiny.weights](https://pjreddie.com/media/files/yolov3-tiny.weights)

+- [yolov3-tiny.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-tiny.cfg) - **33.1% mAP@0.5 - 345(R) FPS** - 5.6 BFlops - 33.7 MB: [yolov3-tiny.weights](https://pjreddie.com/media/files/yolov3-tiny.weights)

-* [yolov3-tiny-prn.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-tiny-prn.cfg) - **33.1% mAP@0.5 - 370(R) FPS** - 3.5 BFlops - 18.8 MB: [yolov3-tiny-prn.weights](https://drive.google.com/file/d/18yYZWyKbo4XSDVyztmsEcF9B_6bxrhUY/view?usp=sharing)

+- [yolov3-tiny-prn.cfg](https://raw.githubusercontent.com/AlexeyAB/darknet/master/cfg/yolov3-tiny-prn.cfg) - **33.1% mAP@0.5 - 370(R) FPS** - 3.5 BFlops - 18.8 MB: [yolov3-tiny-prn.weights](https://drive.google.com/file/d/18yYZWyKbo4XSDVyztmsEcF9B_6bxrhUY/view?usp=sharing)

CLICK ME - Yolo v2 models

-* `yolov2.cfg` (194 MB COCO Yolo v2) - requires 4 GB GPU-RAM: https://pjreddie.com/media/files/yolov2.weights

-* `yolo-voc.cfg` (194 MB VOC Yolo v2) - requires 4 GB GPU-RAM: http://pjreddie.com/media/files/yolo-voc.weights

-* `yolov2-tiny.cfg` (43 MB COCO Yolo v2) - requires 1 GB GPU-RAM: https://pjreddie.com/media/files/yolov2-tiny.weights

-* `yolov2-tiny-voc.cfg` (60 MB VOC Yolo v2) - requires 1 GB GPU-RAM: http://pjreddie.com/media/files/yolov2-tiny-voc.weights

-* `yolo9000.cfg` (186 MB Yolo9000-model) - requires 4 GB GPU-RAM: http://pjreddie.com/media/files/yolo9000.weights

+- `yolov2.cfg` (194 MB COCO Yolo v2) - requires 4 GB GPU-RAM: https://pjreddie.com/media/files/yolov2.weights

+- `yolo-voc.cfg` (194 MB VOC Yolo v2) - requires 4 GB GPU-RAM: http://pjreddie.com/media/files/yolo-voc.weights

+- `yolov2-tiny.cfg` (43 MB COCO Yolo v2) - requires 1 GB GPU-RAM: https://pjreddie.com/media/files/yolov2-tiny.weights

+- `yolov2-tiny-voc.cfg` (60 MB VOC Yolo v2) - requires 1 GB GPU-RAM: http://pjreddie.com/media/files/yolov2-tiny-voc.weights

+- `yolo9000.cfg` (186 MB Yolo9000-model) - requires 4 GB GPU-RAM: http://pjreddie.com/media/files/yolo9000.weights