
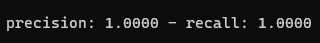

When I train with model = 1, these two values are always 1. Is this correct? Which loss in the logs_edge.dat file should I pay the most attention to when training with model = 1?


According to this, the relevant and selective values must be greater than zero to produce a result. I think the edges you generated had values below the threshold. Make sure your edges contain only the values 0 and 1.
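A minimal sketch of forcing an edge map to contain only 0 and 1 is shown here. It assumes the edge map is already loaded as a NumPy array, that 8-bit images (values up to 255) should be rescaled to [0, 1] first, and that 0.5 is a reasonable cutoff. The function name `binarize_edges` and the 0.5 threshold are hypothetical, not taken from the project, so adjust them to match how your edges are produced.

```python
import numpy as np


def binarize_edges(edge_map, threshold=0.5):
    """Return a uint8 copy of edge_map containing only 0 and 1.

    Hypothetical helper: values >= threshold become 1, everything else 0.
    If the array looks like 8-bit data (max > 1), it is rescaled to [0, 1]
    before thresholding. The 0.5 default is an assumption, not a project value.
    """
    edges = np.asarray(edge_map, dtype=np.float64)
    # Rescale 0-255 images so the threshold is always applied on [0, 1].
    if edges.size and edges.max() > 1.0:
        edges = edges / 255.0
    return (edges >= threshold).astype(np.uint8)


if __name__ == "__main__":
    edge = np.array([[0, 128, 255]])
    binary = binarize_edges(edge)
    print(binary)                     # [[0 1 1]]
    print(np.unique(binary))          # only 0 and 1 should remain
```

Running `np.unique` on the saved edge maps before training is a quick way to confirm that no intermediate values are left over.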
